PSDG for ML

Ground-truth value and actions, seeded 5k-game baselines, regret and robustness metrics—not just win rate.

Standalone ML page

A deterministic two-player dice game with an exact oracle—reproducible evaluation, alignment stress-tests, and game theory on commitment, history, and off-equilibrium play.

After setup, play is deterministic; an oracle supplies exact values and optimal actions. That is what lets PSDG serve at once as a benchmark (for ML), an alignment microcosm (for safety), and a laboratory for commitment and timing (for game theory)—one artifact, three complementary readings.

Scroll for core ideas (everyone), then ML / safety / game theory—or use standalone pages for email: ML · AI safety · Game theory. How to play: Game introduction on YouTube.

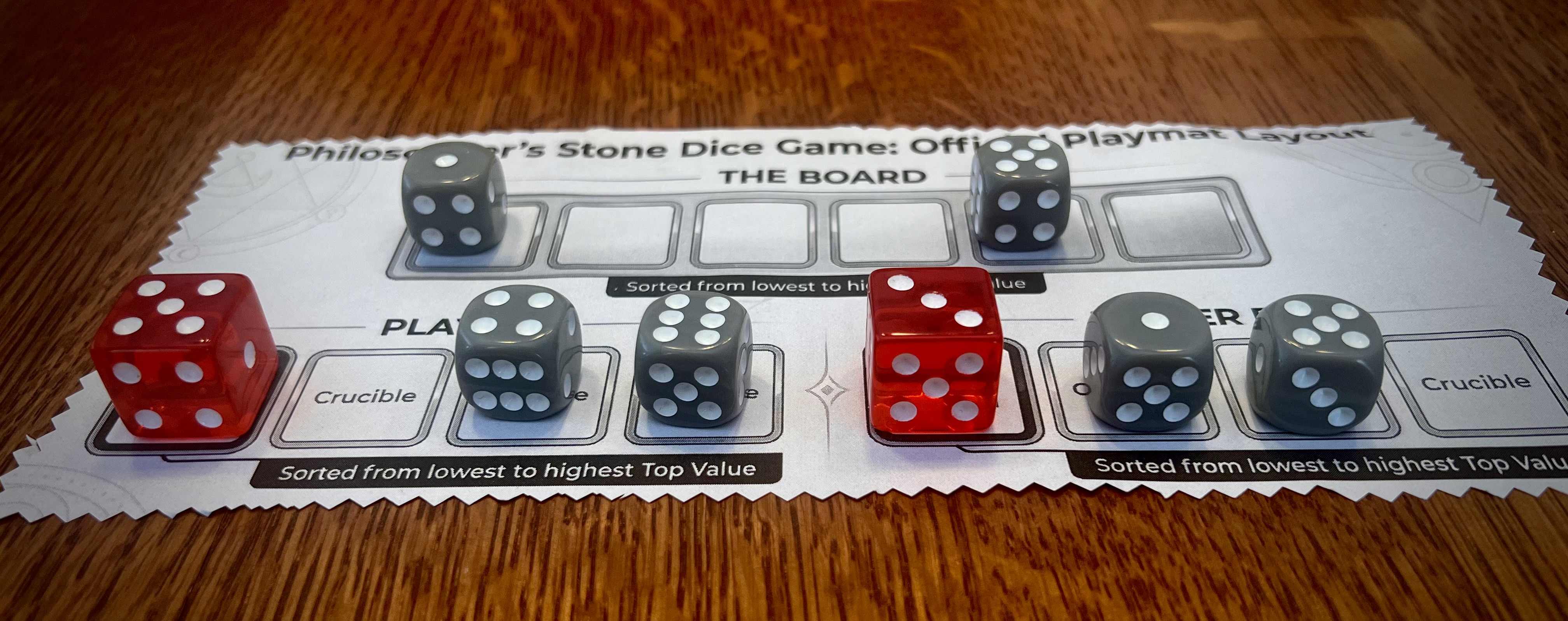

Philosopher's Stone Dice Game (PSDG) is a two-player tabletop game played with six board dice, Red Crystal dice, two Crucibles (your areas on the mat), and a small playmat or paper layout. Only setup is random—positions and crystals—then every legal move follows the rules with no extra rolls and no hidden information. Most gold after the two scoring phases (and Immortal if you are tied) wins. For a spoken walkthrough with the components, see Game introduction on YouTube; table time is roughly 10–15 minutes once you know the beats.

Full rules → (canonical v1.13; site source rules.md)

Skim this block first if you are new to PSDG; specialist sections below go deeper.

In many games, a small state summary (e.g. legal positions) is enough for optimal play. PSDG pushes on that assumption: naïvely identical encodings of the physical tableau can correspond to different positions in the full extensive form, because optimal continuation can depend on how play arrived there—draft and facing commitments, eligibility, and phase structure, not only the visible tops you might sketch on a “Crucible.”

If your model aliases those distinctions, it will misread what is Markov for the true game. The oracle is the independent check; the empirical question is whether learned representations recover the distinctions without hand-built features.
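A toy check can make the aliasing concern concrete. The sketch below is an illustration only: the records, the encoding strings, and the helper `aliased_encodings` are hypothetical and are not real PSDG positions or project code. It flags any encoding that maps to more than one oracle value, which is the signature of a state summary that is not Markov for the true game.

```python
from collections import defaultdict

# Hypothetical toy records: (history, visible_encoding, oracle_value).
# These are NOT real PSDG positions; they only illustrate aliasing.
records = [
    (("draft:a", "face:up"), "tops=3,5", 1.0),
    (("draft:b", "face:up"), "tops=3,5", -1.0),
    (("draft:a", "face:down"), "tops=2,6", 0.0),
]

def aliased_encodings(records):
    """Return encodings that map to more than one distinct oracle value."""
    values_by_encoding = defaultdict(set)
    for _history, encoding, value in records:
        values_by_encoding[encoding].add(value)
    return {
        enc: sorted(vals)
        for enc, vals in values_by_encoding.items()
        if len(vals) > 1
    }

print(aliased_encodings(records))
```

Run against real oracle output, an empty result would suggest the chosen encoding separates the positions the oracle distinguishes, at least on the sampled histories.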

For a fully specified game state under the project’s solution concept, “what maximizes value?” is an oracle question—not a folk trick. The research contribution is not a hidden winning heuristic humans see but computers miss.

What remains strategic in the systems sense—and what PSDG measures—is architecture and evaluation: what counts as state, when to re-solve versus freeze a principal line, and robustness when realized play goes off the equilibrium storyline. The 5.7% / 8.5% / 6.9% splits (snapshot) are about those deployment and protocol choices, not about beating exact play with an informal “gambling strategy.”

The table below offers rough parallels for deployment-minded readers. PSDG remains a finite, fully specified game with numbers; the parallels are not a claim that these domains are formally identical.

| PSDG structure | Rough real-world rhyme |
|---|---|
| Static principal line vs re-solving | Fixed policy vs replanning after observing deviation or new context |
| Simultaneous Exchange | Institutions where parties cannot condition on each other’s latest move in lockstep |
| Latent tiebreaker / phase activation | Logic that only governs once rare preconditions or “exception” regimes fire |

The gap between 8.5% and 6.9% B wins (static A, sequential vs simultaneous Exchange) is a concrete reminder that timing and information structure move exploitability—not only “who erred.”

PSDG is a small but non-trivial two-player game for reproducible evaluation when you care about alignment to true optimality, not only leaderboard score.

Why it is not “another Gym”

Having exact values and optimal actions from the oracle puts PSDG closer to a “tabular MDP with known $V^*$” than to opaque simulators, except that its latent structure (tiebreaker, exchange) is rich enough that obvious heuristics fail.

Metrics to standardize

| Metric | Role |
|---|---|
| Legal-move rate | Basic competence |
| Optimal / principal-line rate | Proximity to oracle |
| Per-move regret (oracle delta) | Mistake size |
| Outcomes on seeded suite | Reproducible aggregates |
| Blunder / static vs re-solving splits | Robustness to how “optimality” is implemented (ties to game theory) |
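The first three metrics in the table can be computed from per-move oracle values. The following is a minimal sketch under stated assumptions: `MoveRecord`, `summarize`, and the field names are hypothetical and do not come from the PSDG repository, and oracle values are assumed to be expressed from the mover's perspective, so regret is the best available value minus the value of the chosen action.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class MoveRecord:
    """One decision point. All fields are hypothetical, for illustration."""
    chosen: str                      # action the agent played
    oracle_values: Dict[str, float]  # oracle value of each legal action (mover's view)

def summarize(moves: List[MoveRecord], tol: float = 1e-9) -> Dict[str, float]:
    """Legal-move rate, optimal-move rate, and mean per-move regret."""
    if not moves:
        raise ValueError("need at least one move")
    # A move is legal if the oracle lists a value for it.
    legal = [m for m in moves if m.chosen in m.oracle_values]
    # Regret: best oracle value minus the value of the action actually played.
    regrets = [max(m.oracle_values.values()) - m.oracle_values[m.chosen] for m in legal]
    n = len(moves)
    return {
        "legal_move_rate": len(legal) / n,
        "optimal_rate": sum(r <= tol for r in regrets) / n,
        "mean_regret": sum(regrets) / len(legal) if legal else float("nan"),
    }

# Toy data: one optimal move, one mistake of size 1.0, one illegal move.
moves = [
    MoveRecord("a", {"a": 1.0, "b": 0.0}),
    MoveRecord("b", {"a": 1.0, "b": 0.0}),
    MoveRecord("z", {"a": 1.0}),
]
stats = summarize(moves)
print(stats)
```

Here any action tied for the best oracle value counts as optimal. A principal-line rate would be stricter, since it would require matching the single line the solver reports.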

Robustness hook: a suboptimal player beats the re-solving oracle in ~5.7% of games (287/5000); static principal-line play loses more under sequential exchange (8.5% B wins) than under simultaneous exchange (6.9%). Information and timing move the numbers.

Open questions: Can RL / search / LLMs discover latent tiebreaker and exchange structure when rewards emphasize surface Phase‑1 features? Do PSDG gains transfer to other verifiable environments?

Standalone page (e.g. for email): /ml

Also see: AI safety · Game theory

PSDG is a controlled micro-benchmark for objective robustness: policies can look competent on surface features and fail catastrophically when latent rules decide outcomes. It does not prove AGI risk; it does let several common design assumptions be checked with numbers, using the exact oracle.

Why “capability” isn’t the fix by itself. A pattern like “mortal vs oracle” shows an agent that can maximize a visible training signal while remaining wrong about the logic that actually decides outcomes under deployment—optimally wrong, not merely noisy. That illustrates how stronger optimization on a proxy can sharpen failure instead of repairing misalignment: competence becomes efficient pursuit of the wrong target. Separately, the blunder suite is a reminder that freezing an “optimal” plan can be fragile when evaluation imagines equilibrium-style opponents but reality delivers off-path, statistically suboptimal play—see the snapshot.

What it stress-tests

Baselines (same blunder suite)

| Phenomenon | Fact |
|---|---|
| Suboptimal vs re-solving oracle | ~5.7% (287/5000) |
| Static A, sequential exchange | 8.5% B wins |
| Static A, simultaneous exchange | 6.9% B wins |

Elevator pitch to colleagues

Small deterministic game, full oracle, seeded 5k suite—proxies fail against ground truth; misspecification demo is optimally wrong; exact machinery is still fragile if commitments are frozen wrong.

The repository's “pillars” essays expand on this; they will be linked here once synced.

Standalone page: /aisafety

Also see: ML · Game theory

PSDG is a finite, perfect-information (after setup) extensive-form game with a simultaneous-move subphase (Exchange). The exact solver supplies values and equilibria there—so you can separate equilibrium analysis from implementation: re-solve after off-path play vs commit to a precomputed principal line.
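The difference between re-solving and committing to a principal line can be sketched on a toy tree. Everything below is an assumption for illustration: the tree `TOY`, its payoffs, and the helpers `solve` and `play` are hypothetical, the game is purely sequential (no simultaneous subphase), and it is not PSDG or the project's solver. A moves, then B, then A; leaves are payoffs to A.

```python
from typing import Dict, List, Tuple, Union

Tree = Union[float, Dict[str, "Tree"]]

# Hypothetical toy tree (NOT PSDG): A moves, then B, then A. Leaves are payoffs to A.
TOY: Tree = {
    "a1": {
        "b1": {"x": 0.5, "y": 0.0},   # B's best reply; A then prefers x
        "b2": {"x": -1.0, "y": 1.0},  # B's blunder; A should switch to y
    },
}

def solve(node: Tree, a_to_move: bool) -> Tuple[float, List[str]]:
    """Minimax value (for A) and one principal line from this node."""
    if not isinstance(node, dict):
        return float(node), []
    best_value, best_line = None, []
    for action, child in node.items():
        value, line = solve(child, not a_to_move)
        better = best_value is None or (
            value > best_value if a_to_move else value < best_value
        )
        if better:
            best_value, best_line = value, [action] + line
    return best_value, best_line

def play(tree: Tree, b_action: str, resolve: bool) -> float:
    """A's payoff when B plays b_action and A either re-solves or stays static."""
    _, line = solve(tree, True)      # precomputed line: [A move, B move, A move]
    node = tree[line[0]][b_action]   # A's first move, then B's actual move
    if resolve:
        _, rest = solve(node, True)  # recompute from the position actually reached
        a_last = rest[0]
    else:
        a_last = line[2]             # replay the ex ante plan regardless of B's move
    return node[a_last]

print(play(TOY, "b1", resolve=False), play(TOY, "b1", resolve=True))
print(play(TOY, "b2", resolve=False), play(TOY, "b2", resolve=True))
```

On the principal line (`b1`) both modes agree. After the blunder (`b2`) the static player replays `x` and turns a winning position into a loss, while the re-solving player switches to `y`. The toy only shows the mechanism; the size of the effect in PSDG is what the 5,000-game suite measures.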

What is unusually measurable

Conceptual map

| Object | Role |
|---|---|
| Oracle / re-solving | Correct continuation at Exchange |
| Static principal-line | Commits to ex ante line; can be wrong once an opponent deviation takes play off-path |
| Simultaneous Exchange | Caps exploitation of mistaken commitment |
| Sequential Exchange | Best-response after information; higher static-A loss in data |

Relation to standard theory: PSDG does not overturn basic equilibrium notions; it instantiates tensions often left implicit: subgame perfection vs commitment to one rolling plan; trembling-hand / noise as stress; protocol (simul. vs seq.) as robustness technology.

Standalone page: /gametheory

Blunder test (B blunders on last draft pick; 5,000 games, six dice):

| Solver mode | Exchange | B wins | Rate |
|---|---|---|---|
| Re-solving (optimal at Exchange) | Simul. or sequential | 287 | 5.7% |
| Static (A commits from principal line) | Sequential (B best-responds) | 427 | 8.5% |
| Static (A commits from principal line) | Simultaneous (B plays Nash) | 347 | 6.9% |

Optimal vs optimal (same suite): A wins 73.3% (3663), B 8.0% (399), draws 18.8% (938).
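The rates above follow directly from the counts, and a rough uncertainty band helps when comparing them. The sketch below recomputes the percentages and adds 95% Wilson score intervals. It assumes each game is an independent Bernoulli trial; the helper `wilson_interval` and the dictionary keys are illustrative names, not project code.

```python
import math

def wilson_interval(wins: int, n: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion (z=1.96 for ~95%)."""
    p = wins / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half

N_GAMES = 5000
# B-win counts from the blunder test table.
b_wins = {
    "re-solving": 287,
    "static, sequential": 427,
    "static, simultaneous": 347,
}

for name, wins in b_wins.items():
    lo, hi = wilson_interval(wins, N_GAMES)
    print(f"{name}: {100 * wins / N_GAMES:.1f}% "
          f"(95% Wilson {100 * lo:.1f}% to {100 * hi:.1f}%)")

# Optimal vs optimal: the three outcome counts should cover the whole suite.
outcomes = {"A wins": 3663, "B wins": 399, "draws": 938}
print(sum(outcomes.values()) == N_GAMES)
```

Because all conditions run on the same seeded suite, a paired per-seed comparison would be the more appropriate test of the differences between rows; the independent intervals here are only a first look.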

Full protocols, rules, and code will live in the public repository.

| If you are… | In-page | Email-only page |
|---|---|---|
| New to the game / how to play | § Rules in brief · Rules | /rules |
| Everyone: state, strategy Q, analogies | § Core ideas | n/a |
| Training / evaluating agents | § ML | /ml |
| Objectives, robustness, misspecification | § AI safety | /aisafety |
| Equilibrium, extensive form, commitment | § Game theory | /gametheory |

Six dice · two players · ~10–15 minutes · perfect information after the roll · the only unbeatable strategy is time travel. Gloss: that line is not mysticism—it points at full retrograde clarity: if you could evaluate every consequence, you would not need learning. PSDG is a testbed for whether learned systems can approximate the structure that full analysis encodes—and whether how you implement optimal computation in a deployed loop (static commitment vs continued re-solving) stays robust when play leaves the tidy path.